Most conjoint studies don’t fail because of bad methodology. They fail because they don’t reflect how people actually make decisions.

On paper, the design looks solid: clean attributes, balanced levels, a logical structure. But once the results are applied in the real world, they don’t match. Products don’t perform as expected. Pricing misses the mark. Feature priorities feel off.

The gap isn’t technical. It’s behavioral.

Conjoint analysis is a research method used to understand how customers make trade-offs between features, pricing, and product options when making a decision.

If your conjoint study doesn’t simulate real-world decision-making, it won’t predict real-world outcomes.

Quick Summary

- Most conjoint studies fail due to unrealistic design

- Real accuracy comes from real decision context

- Fewer attributes improve data quality

- Choice tasks should mimic actual purchase situations

Why Conjoint Studies Fail

Traditional conjoint design focuses on structure:

- Define attributes

- Assign levels

- Build choice sets

- Run analysis

But real decisions are not made in structured environments. They’re influenced by:

- Context

- Constraints

- Competition

- Simple decision shortcuts (like choosing the cheapest or most familiar option)

When these elements are missing, respondents stop behaving like buyers and start behaving like survey participants.

This is why designing a conjoint study around real customer behavior is critical for getting reliable insights. For a deeper breakdown of why most conjoint studies fail, read this detailed guide.

When respondents answer as survey participants rather than as buyers, the results show:

- Overstated willingness to pay

- Unrealistic feature importance

- Inflated preference for your concept

The result looks precise—but it’s directionally wrong.

Design a Conjoint Study With Real Decisions

Before designing attributes or choice tasks, ask a more important question:

What does the actual decision look like in real life?

Consider:

- Where does the decision happen? (online, in-store, B2B negotiation)

- What alternatives exist? (direct competitors, substitutes, doing nothing)

- What constraints apply? (budget, time, availability)

Most studies skip this step. That’s where accuracy is lost.

A conjoint study is not just a survey—it’s a simulation of a real choice environment. If the environment is unrealistic, the output will be too.

Most modern studies use choice-based conjoint (CBC), which closely mimics real purchase decisions by asking respondents to choose between realistic options.

Choose the Right Conjoint Analysis Attributes

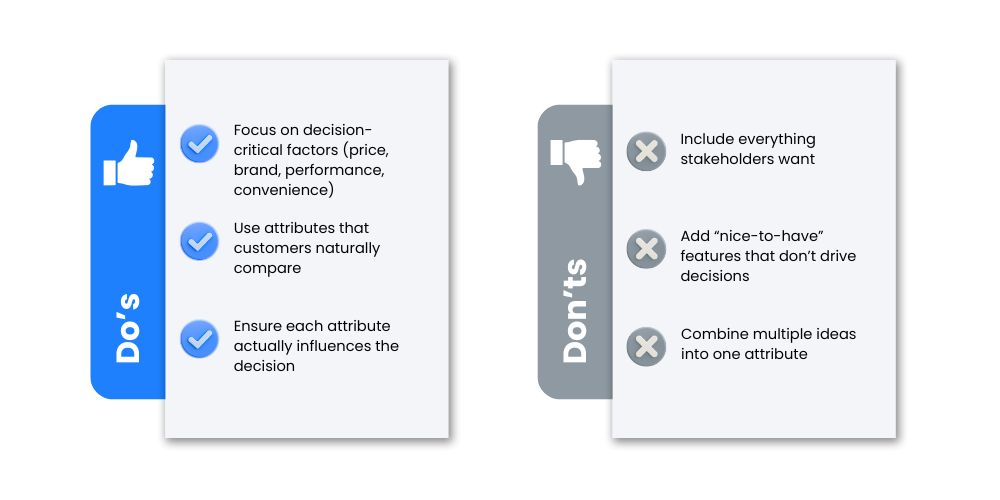

A common mistake is treating attributes as a checklist rather than decision drivers.

How Many Attributes:

- Use 5–10 attributes. More can be accommodated with the right techniques, but the priority is that every attribute is directly relevant to the decision and to the simulations you plan to run

- Keep them distinct

What Makes a Good Attribute:

Attributes should reflect what people actively trade off in real decisions.

What this means:

More attributes don’t improve accuracy. They reduce it.

When respondents face too many variables, they simplify decisions artificially—leading to unreliable data.

Set Realistic Levels in Conjoint Analysis

Even well-chosen attributes fail if levels don’t reflect real-world options.

Common Level Issues:

- Price ranges are too wide or too narrow

- Feature combinations that don’t exist in reality

This creates hypothetical bias—respondents evaluate scenarios they would never actually encounter.

Choosing Real Prices:

- Use realistic price points based on market data

- Avoid extreme or impossible combinations

- Reflect actual product configurations available today
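One way to act on the points above is to enumerate candidate profiles and drop combinations that could not exist in the market. The following is a minimal sketch, not a full design procedure: the attributes, levels, and the `is_realistic` rule are hypothetical placeholders that would come from your own market data.

```python
from itertools import product

# Hypothetical attributes and levels; replace with levels taken from market data.
LEVELS = {
    "price": [49, 59, 69],
    "storage_gb": [64, 128, 256],
    "warranty_years": [1, 2],
}

def is_realistic(profile):
    """Illustrative rule: the largest storage tier is never sold at the lowest price."""
    return not (profile["storage_gb"] == 256 and profile["price"] == 49)

def realistic_profiles(levels, rule):
    """Yield every level combination that passes the realism rule."""
    names = list(levels)
    for combo in product(*(levels[name] for name in names)):
        profile = dict(zip(names, combo))
        if rule(profile):
            yield profile

profiles = list(realistic_profiles(LEVELS, is_realistic))
print(f"{len(profiles)} realistic profiles kept")
```

Keep such exclusion rules few and well justified. Each one removes combinations the model could otherwise learn from, so heavy use of prohibitions tends to weaken the design.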

Realistic vs. Unrealistic Examples (same product category):

Unrealistic:

- Product A: $5

- Product B: $500

Realistic:

- Product A: $49

- Product B: $59

Key takeaway:

If the levels don’t feel real, the decisions won’t be either.

Design Real Conjoint Choice Tasks

This is where most studies break down.

In real life:

- People don’t evaluate 10 options at once

- They don’t analyze every feature equally

- They often choose nothing

Your choice tasks should reflect that:

1. Keep options limited

- 3–5 choices per screen is optimal

- More than that creates fatigue

Most conjoint studies include 8–15 choice tasks per respondent to balance data quality and fatigue.

2. Always include a “None” option

- In reality, people can walk away

- Removing this forces artificial decisions

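- The Analytics Team uses an advanced form of “None” inclusion called Dual-Response: respondents first choose among the options shown, then say whether they would actually buy their choice. This gives a more accurate prediction of opting out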

3. Maintain balance

- Ensure attributes and levels appear evenly

- Avoid patterns that bias selection

Why this matters:

If your study forces a decision that wouldn’t happen in real life, your results are biased from the start.
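The three rules above (limited options, a “None” option, level balance) can be sketched in a few lines. This is a simplified illustration under stated assumptions, not how any particular conjoint platform builds its designs: the attributes and levels are hypothetical, and production tools typically use more refined design algorithms than this shuffle-and-check approach.

```python
import random
from collections import Counter

NONE_OPTION = "None of these"

# Hypothetical attributes and levels, for illustration only.
LEVELS = {
    "price": ["$49", "$59", "$69"],
    "storage": ["64 GB", "128 GB", "256 GB"],
    "warranty": ["1 year", "2 years"],
}

def build_choice_tasks(levels, n_tasks=10, n_alts=3, seed=0, max_tries=1000):
    """Return n_tasks screens, each with n_alts distinct profiles plus a None option."""
    rng = random.Random(seed)
    n_slots = n_tasks * n_alts
    for _ in range(max_tries):
        # One shuffled column per attribute. Each level fills an equal share
        # of slots when n_slots is divisible by the number of levels.
        columns = {}
        for attr, options in levels.items():
            column = [options[i % len(options)] for i in range(n_slots)]
            rng.shuffle(column)
            columns[attr] = column
        profiles = [{attr: columns[attr][i] for attr in levels} for i in range(n_slots)]
        tasks = [profiles[t * n_alts:(t + 1) * n_alts] for t in range(n_tasks)]
        # Accept the draw only if no screen shows the same profile twice.
        if all(len({tuple(p.items()) for p in task}) == n_alts for task in tasks):
            return [task + [NONE_OPTION] for task in tasks]
    raise RuntimeError("no valid design found; relax the settings or raise max_tries")

tasks = build_choice_tasks(LEVELS)
price_counts = Counter(p["price"] for task in tasks for p in task if p != NONE_OPTION)
print(price_counts)  # each price level appears equally often across the design
```

With 10 tasks of 3 alternatives there are 30 profile slots, so every level of a three-level attribute appears 10 times and every level of a two-level attribute appears 15 times.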

Add Competitive Context

One of the biggest mistakes in conjoint design is testing concepts in isolation.

In reality, customers are choosing between:

- Competing brands

- Substitute products

- Existing habits

What happens if you ignore competition:

- Your product looks better than it actually is

- Price sensitivity is underestimated

- Market share simulations become unreliable

Best practice:

- Include realistic competitor profiles

- Reflect actual market positioning

- Test within a competitive set

Key insight:

Customers don’t choose your product. They choose between options.
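To see why isolation flatters a concept, here is a small share-of-preference sketch using the standard multinomial logit rule. The utility values are invented for illustration, and the simulation is run on a single set of aggregate utilities; real simulators often compute shares per respondent and then average.

```python
import math

def logit_shares(utilities):
    """Convert total utilities into choice shares with the multinomial logit rule."""
    top = max(utilities.values())  # subtract the max for numerical stability
    exp_u = {name: math.exp(u - top) for name, u in utilities.items()}
    total = sum(exp_u.values())
    return {name: value / total for name, value in exp_u.items()}

# Hypothetical total utilities; "none" is the walk-away option.
isolated = {"our_concept": 1.2, "none": 0.0}
competitive = {
    "our_concept": 1.2,
    "competitor_a": 1.0,
    "competitor_b": 0.6,
    "none": 0.0,
}

share_alone = logit_shares(isolated)["our_concept"]
share_in_market = logit_shares(competitive)["our_concept"]
print(f"alone: {share_alone:.0%}, in a competitive set: {share_in_market:.0%}")
```

The concept’s utility is identical in both scenarios. Only the set of alternatives changes, and that alone is enough to cut its simulated share substantially.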

Common Conjoint Mistakes That Distort Results

The same issues come up again and again in discussions of conjoint design, but they are rarely explained properly.

Here’s what actually goes wrong:

1. Too Many Attributes + Sub-par Techniques

Leads to cognitive overload → respondents simplify decisions → unreliable data

(The good news is that The Analytics Team knows how to run complex conjoint studies.)

2. Unrealistic Pricing

Creates false willingness to pay

3. No “None” Option

Forces choices → inflates preference

4. Ignoring Competition

Removes real-world context → overstates demand

5. Over-relying on Averages

Masks differences across segments → poor decision-making

6. Weak Differentiation

Confuses respondents → weak signals

From Data to Decisions: Interpreting Conjoint Results

Even a well-designed study can fail at the interpretation stage.

Conjoint outputs are not answers—they are inputs for decisions.

What to Focus On:

- Trade-offs between features

- Price sensitivity patterns

- Scenario simulations

What to Avoid:

- Treating utility scores as the absolute truth

- Ignoring segment-level differences

- Making decisions without testing scenarios

What this means:

The value of conjoint lies in simulation, not just measurement.
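The warning about segment-level differences can be made concrete with a short sketch. It uses the common range-based definition of attribute importance (an attribute’s utility range divided by the sum of all ranges). The two segments and their part-worth utilities are hypothetical and assumed to be of equal size.

```python
def attribute_importance(part_worths):
    """Range-based importance: each attribute's utility range over the sum of ranges."""
    ranges = {
        attr: max(levels.values()) - min(levels.values())
        for attr, levels in part_worths.items()
    }
    total = sum(ranges.values())
    return {attr: r / total for attr, r in ranges.items()}

def average_part_worths(segments):
    """Average part-worths across segments, assuming equally sized segments."""
    n = len(segments)
    first = next(iter(segments.values()))
    return {
        attr: {lvl: sum(seg[attr][lvl] for seg in segments.values()) / n for lvl in levels}
        for attr, levels in first.items()
    }

# Hypothetical part-worth utilities for two equally sized segments.
SEGMENTS = {
    "price_sensitive": {
        "price": {"$49": 1.5, "$59": 0.0, "$69": -1.5},
        "brand": {"A": 0.25, "B": -0.25},
    },
    "brand_loyal": {
        "price": {"$49": 0.25, "$59": 0.0, "$69": -0.25},
        "brand": {"A": 1.5, "B": -1.5},
    },
}

for name, part_worths in SEGMENTS.items():
    print(name, attribute_importance(part_worths))
print("average", attribute_importance(average_part_worths(SEGMENTS)))
```

In this example the averaged result suggests price and brand matter equally, which describes neither segment: one is driven mostly by price and the other mostly by brand.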

Before designing your study, explore when to use conjoint vs MaxDiff to choose the right approach for your research.

Validate With Real Behavior

Data quality depends first on having the right sample size: conjoint studies typically use 100 to 500 respondents, depending on complexity and segmentation needs. But even a well-structured, well-sampled study has a limit, and this is where most studies lose reliability: conjoint measures stated preferences, not actual behavior, and the two are not always aligned.

To close the gap:

- Run pilot tests

- Compare with real sales or behavioral data

- Use conjoint as a directional tool, not a final answer

Why this matters:

If your results aren’t validated, they’re assumptions—just more structured ones.
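A simple way to compare simulated shares with real sales data is the mean absolute error between the two. The sketch below assumes you already have simulated shares and observed market shares for the same set of products; all figures are invented for illustration.

```python
def share_mae(predicted, actual):
    """Mean absolute error between predicted and observed shares."""
    if predicted.keys() != actual.keys():
        raise ValueError("predicted and actual must cover the same products")
    return sum(abs(predicted[k] - actual[k]) for k in predicted) / len(predicted)

# Hypothetical simulated shares and observed market shares.
predicted = {"our_product": 0.40, "competitor_a": 0.35, "competitor_b": 0.25}
actual = {"our_product": 0.30, "competitor_a": 0.40, "competitor_b": 0.30}

mae = share_mae(predicted, actual)
print(f"Mean absolute error: {mae:.1%}")

# The signed gap shows the direction of the bias for each product.
for product in predicted:
    print(product, f"{predicted[product] - actual[product]:+.0%}")
```

A positive gap for your own product and negative gaps for competitors would be consistent with the inflated-preference pattern described earlier, and a reason to revisit the design before acting on the numbers.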

Final Thought: Conjoint Is a Simulation, Not a Survey

The biggest shift you can make is this:

Stop thinking of conjoint as a survey design exercise.

Start treating it as a decision simulation problem.

When your study reflects:

- Real choices

- Real constraints

- Real alternatives

…it starts producing results that actually hold up in the market.

How The Analytics Team Works

We work with your full-service or internal research team to add the analytics layer and expertise. We handle only the analytics and the directly related survey design elements. At The Analytics Team, conjoint design is approached as a behavioral modeling problem, not just a survey setup.

We focus on:

- Structuring studies around real decision environments

- Designing choice tasks that reflect actual behavior

- Ensuring outputs translate into clear, actionable decisions

If your conjoint study needs to reflect real customer behavior, the right design makes all the difference.

Start with a structure built around real decisions—not just survey logic. A short consultation can help clarify the right approach for your study.

Build Studies With the Right Foundation

If your conjoint study doesn’t reflect how customers actually choose, no level of analysis will fix it.

Start with behavior. Build around reality. Then design your study.

That’s what makes the difference between data that looks good and data that works.

Frequently Asked Questions

1. What is conjoint analysis used for?

Conjoint analysis is used to understand how customers make trade-offs between product features, pricing, and options, helping businesses design better products and pricing strategies.

2. How many attributes should a conjoint study have?

Most conjoint studies include 5–7 attributes to stay realistic without overwhelming respondents. The Analytics Team has years of experience with complex conjoint, meaning 10 or more attributes, and can handle all of your needs in this area.

3. How many choice tasks should a conjoint study include?

Typically, 8–15 choice tasks per respondent are used to ensure enough data without causing fatigue.

4. Should you include a “None” option in conjoint analysis?

Yes, including a “None” option reflects real-world behavior where customers can choose not to buy.

5. Why do conjoint studies sometimes fail?

Conjoint studies fail when they don’t reflect real decision conditions, for example because of unrealistic pricing, missing competition, or forced choices.